Unless you work in a factory, an autonomous vehicle (AV) is probably going to be the first physical robot you interact with, and the age of the AV is fast approaching. But are we ready for the day when we’re crossing the street and an actual AV is fast approaching?

In a few years, or a few beyond that, as you and I are walking together across Mercer Street on the way to an Age of the Autonomous Vehicle exhibit at MOHAI, a robot car will be coming down the street. If you’re unsure about riding in self-driving cars, you will be able to avoid it for a while. But you are going to have to deal with them anyway, driving toward you on the street like this, or passing you in the parking lot, or while you’re waiting at the bus stop. AVs are eventually going to be as common as smartphones, but their operating system updates might do more than crash your email app.

Well, what about this approaching AV on our trip across Mercer? We may not worry about it much, because with autonomous vehicles we should experience safer roads overall. But this scenario is not about a statistic; it is about a direct interaction between you and me and a robot. Or maybe it isn’t. There will be a mix of autonomous-capable vehicles and old non-autonomous ones for a long time, and like the Electoral College and landlines, human driving is likely to continue long past the time of rational sense. The person in our Mercer vehicle can be distracted, and in this scenario has been by the text he just received, which caused him to drop his phone on the passenger-side floor. So, who’s driving that car?

Let’s say we’re in luck, and it’s a robot. Now, we’re crossing but the stop light changed to green for the car. Like the guy in the car, I also am checking my texts, and I also drop my phone. Of course, I stop to pick it up, and the AV bearing down on us, after millions of hours of machine learning, has understood that pedestrians sometimes drop phones and sometimes stop to pick them up.

But robot cars, while they won’t get distracted or drunk like us, will, like us, have competing concerns. How much safety margin (i.e., space) did the car give us? If its mechanical brain calculated that there was a 0.1% chance that I would drop my phone, but that if it slowed down to give us more space there was a 5% chance it would be late to pick Timmy up from soccer practice, which would it prioritize? This is a question that deserves to be decided by our most brilliant ethicists, but that may instead be answered, by default, by lawyers in corporate boardrooms and at 2am by Red Bull-sipping programmers.
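To see why the choice of weights matters, here is a rough sketch of that trade-off in Python. The two probabilities come from the scenario above; the cost weights, the function name, and the simplifying assumption that a dropped phone means a collision unless the car slows are all invented for illustration.

```python
# Probabilities taken from the scenario in the text.
P_PEDESTRIAN_STOPS = 0.001  # 0.1% chance the pedestrian drops a phone and stops
P_LATE_IF_SLOWING = 0.05    # 5% chance of a late pickup if the car slows down


def should_slow_down(cost_of_collision: float, cost_of_being_late: float) -> bool:
    """Compare expected harm of keeping speed with expected cost of slowing.

    The cost arguments are hypothetical weights in arbitrary units.
    Assumes (for illustration) that a stopped pedestrian is hit unless
    the car has slowed.
    """
    expected_harm_if_not_slowing = P_PEDESTRIAN_STOPS * cost_of_collision
    expected_cost_of_slowing = P_LATE_IF_SLOWING * cost_of_being_late
    return expected_harm_if_not_slowing > expected_cost_of_slowing
```

Under these assumptions the car keeps its speed only if someone has decided that hitting a pedestrian is less than 50 times as bad as being late for Timmy. Whoever picks those two numbers is answering the ethical question.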

What we need, you and I as we cross–and kids walking on the sidewalk to school, and a person in a wheelchair in a parking lot, and a family standing at the corner of 5th and Pine on the way to dinner–is a set of standards that will govern robot car behavior. This is the kind of thing that took a long time to settle down between drivers and pedestrians a hundred years ago when cars first came into widespread use, and not really for the best (see: the invention and criminalization of “jaywalking”). This process can happen more quickly and hopefully more rationally now, when the 2am clicks on the keyboards of a few programmers will control the behavior of millions of vehicles.

So let’s get down to business. Just to put it out there, I’ll say that AVs must not degrade public life. In the hierarchy of importance, humans have to remain on top, followed by animals (particularly dogs, because dogs), with robots at the bottom. Sorry robots. But if we’re crossing this street and on one side are you and me, a dog, and my slippery phone, and there’s a 3,000-pound robot car on the other, and the robot wants to drive through the spot where we’re standing, sorry humans, dog and phone.

Through what has gone into their programmed parameters, and through simple human uncertainty about what these faceless, semi-sentient machines “intend” in a given situation, AVs might easily become an intimidating presence. This must not be allowed to happen. For our standards, Article 1 (let’s call it Article 1 to give it some weight) can be broad and foundational.

1. An autonomous vehicle must conform to the safety, comfort and expectations of people outside the vehicle.

Going back to our situation crossing the street, we also need to know if giving a stern look to the person in the “driver’s” seat of that car is really going to slow that machine down.

2. Humans must be made aware when a vehicle is under autonomous control.

This seems simple enough, though something as gaudy as the flashing yellow lights on warehouse forklifts would be hard to take if there was an unending stream of them going by. How about something subtler, like a blue light on the front and back of any vehicle in autonomous operation?

Beyond knowing who’s driving, we also need to know the rules of interaction. For pedestrians, an Audi shouldn’t behave differently than a Ford or a Honda, and a private vehicle shouldn’t behave differently than an autonomous taxi. We are going to need standards relating to the safety, comfort and expectations thing. To begin, let’s say that there should be a minimum distance a moving AV must maintain from a human being. This distance should increase with speed (and thus with the risk of making us dead), so that a robot driving 70 miles an hour can’t graze by and nick the tail of your electric scooter. But even while shimmying into a parking space, an AV shouldn’t be able to put its bumper against your pants leg. Let’s start with a round number for Article 3, to be refined:

3. An autonomous vehicle must be at least five feet from any human in order to move at any speed.
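One way the refinement could look, with the distance growing with speed, is sketched below in Python. The five-foot floor is Article 3’s round number; the linear growth rate (`FEET_PER_MPH`) and the function names are made-up placeholders, not proposed values.

```python
# Illustrative only: the 5 ft floor is Article 3's "round number";
# FEET_PER_MPH is a hypothetical growth rate, not a proposed standard.
MIN_CLEARANCE_FT = 5.0
FEET_PER_MPH = 0.1  # assumed: extra feet of clearance per mph of vehicle speed


def required_clearance_ft(speed_mph: float) -> float:
    """Minimum distance an AV must keep from a person at a given speed."""
    if speed_mph < 0:
        raise ValueError("speed must be non-negative")
    return MIN_CLEARANCE_FT + FEET_PER_MPH * speed_mph


def may_move(speed_mph: float, distance_to_nearest_human_ft: float) -> bool:
    """True if the AV is far enough from the nearest person to move at this speed."""
    return distance_to_nearest_human_ft >= required_clearance_ft(speed_mph)
```

With these placeholder numbers, a car creeping into a parking space still needs its five feet, and a car at 70 miles an hour needs about twelve. What the real curve should be is exactly the part to be refined by how safe it feels to the people standing there.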

Rules beyond that get tricky, as AVs begin to move through dense urban environments, passing masses of people standing on street corners waiting for the light. We will see over time what feels safe to people as AVs pass by, but the point to remember is this is about how humans feel about the situation, not about a corporate calculation.

Our final rules, Articles 4 and 5, go back to our hierarchy of importance from a few paragraphs back. Humans are still in charge. We do not have to respond to or take commands from robots. They cannot tell us to stay on the sidewalk, only maybe advise us what’s safe by their calculation. Nor should humans have to behave differently or wear something like a transmitter to tell AVs where we are.

4. An autonomous vehicle must signal its intentions to people outside the vehicle but does not in any way command them.

Take that, robots!

And what if, in our street-crossing scenario, after I pick up my phone and we’re walking on, we notice that you dropped your sunglasses while you stopped to wait for me? The AV has stopped for us as we got my phone, but now it’s starting up again. It’s not going to stop for your sunglasses, but those frames look really, really great on you. Short of throwing ourselves in front of the car, how do we stop it? This may sound trivial, but how would it be in another situation, like if your AV dropped you off outside your house so you can walk in the front door, and it’s pulling toward the garage to park, but as the garage door opens you notice your 3-year-old playing on the floor inside. Maybe the AV notices that small shape, maybe not. Won’t you want to be able to tell it to stop, no matter what it sees or doesn’t see? There is going to need to be some way for people outside of AVs to control the cars’ actions, or at least tell them to stop what they’re doing if the need arises. And so, Article 5:

5. A non-occupant must be able to control an autonomous vehicle, at a minimum to cause it to stop.

Of course, this brings up the issue of non-occupants being able to mess with AVs just for a joke.

Teenagers will be teenagers, but saving lives is a bigger issue, as if that even needs to be said (but I just did). So, if it’s 2027, and AVs worked out like we hoped they would, and vehicular deaths have fallen way below the 100 each day they were in 2017, and you’re on the way to work, just sitting down with your fair trade coffee but already watching the latest episode of Black Mirror, and the neighbor kid stops your car for ten seconds in your driveway, you’re just going to have to get out and yell at the little punk. Even in the future, robots can’t do everything for you.

John David Beutler is an urban designer at Skidmore, Owings & Merrill. A former Capitol Hill resident, since January he has been in the Bay Area, scheming about his next trip north.

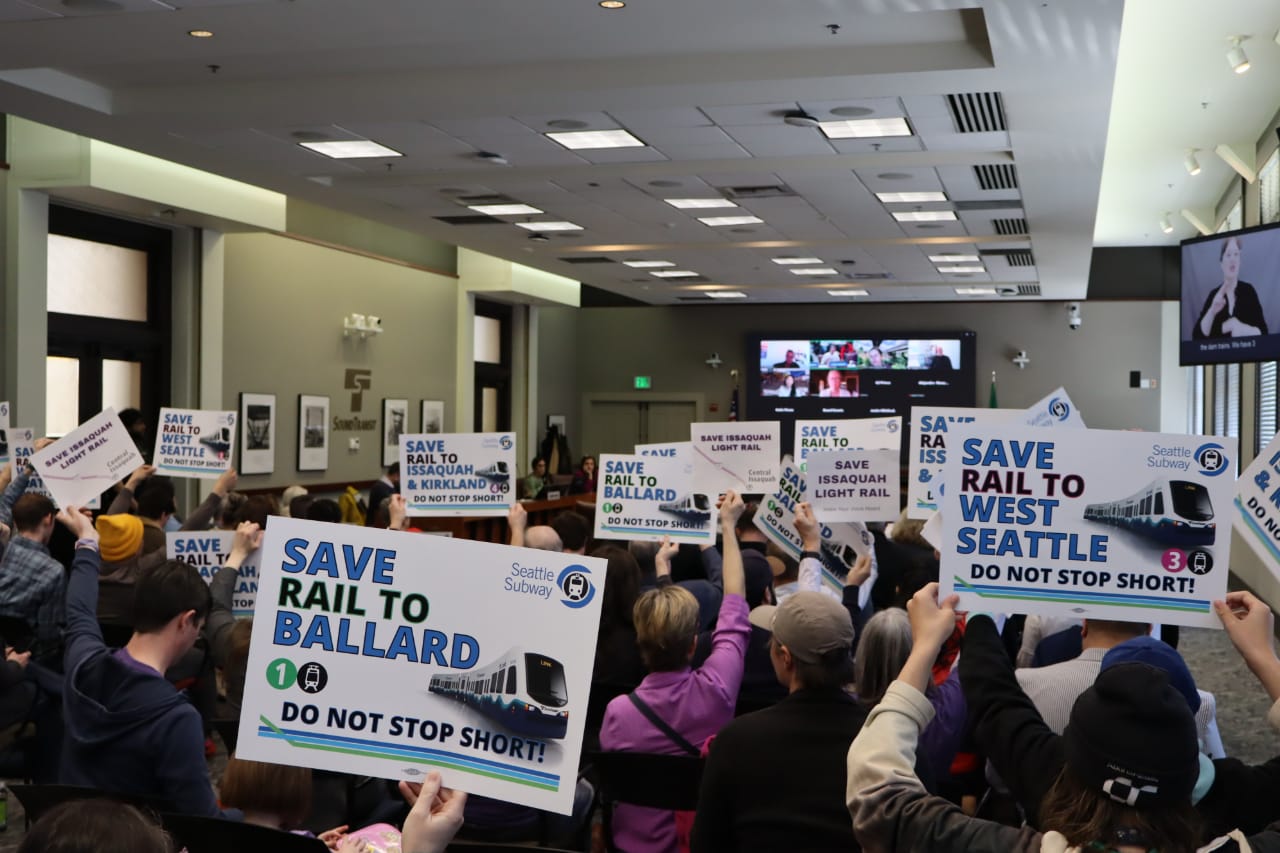

Autonomous Vehicle and E-bike Regulation Among Transportation Bills Headed to Governor